Azure Hybrid Cloud Design: VPN vs Service Bus

Microsoft Azure services allow us to deploy workloads that process custom business logic, workflows, integration services, data and much more. However, most companies also have systems and data on on-premises servers and devices that need to interact with these cloud components.

There are several ways to integrate with your on-premises systems. Let’s take a look at some options and their design implications.

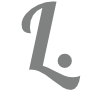

VPN Based Integrations

We can create a VPN connection from an on-premises server or network to Microsoft Azure. This way, traffic can be routed from Azure to the on-premises resources and back.

A Point-to-Site (P2S) connection or a Site-to-Site (S2S) connection can be configured using standard IPsec. The S2S setup makes more sense from an enterprise IT perspective, as it routes traffic for a group of servers instead of individual machines. We recommend setting up redundant connections for reliability. This means deploying a load-balanced router with IPsec support that connects to dual VPN gateways with failover in Azure. Once the VPN is in place, we can connect from Azure to the on-premises server resources.

We can also use ExpressRoute as an alternative. This gives us a dedicated network channel to Azure that does not go over the public Internet. Traffic is not encrypted but is isolated, and the connection provides much better latency and throughput than a VPN setup. From a cost perspective, a small service would cost in the region of 1500-300 euro per month depending on speed, which is comparable to having an additional internet service connected to your office location. For redundancy purposes, you should still set up an S2S VPN as a failover.

We run code inside an App Service or Function that interacts with our on-premises servers. Some considerations of this model:

- Contains logic inside the App Service or Function using custom code

- Can expose its endpoint to callers via a REST API

- Requires deployment of “proxy code” that must be maintained

- Requires setup of virtual networks and constant connection to the on-premises network

We could also use the gateway feature of the API Management Service and publish a REST API. Some considerations of this model:

- Decouples business logic code from transport

- Reverse proxy allows changing backend service based on message format, headers, version and more

- No need for “proxy code”

- Preferred solution if a steady connection exists

- Still requires setup of virtual networks
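
To make the reverse-proxy idea concrete, here is a minimal sketch of the decision a gateway makes when it picks a backend from a request header. In API Management itself this kind of rule would live in the gateway's policy configuration rather than in application code; the Python below only illustrates the logic. The header name `Api-Version`, the `Request` type and the backend URLs are assumptions made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    path: str
    headers: dict = field(default_factory=dict)


# Hypothetical backend addresses, used only for illustration.
BACKENDS = {
    "v1": "https://orders-v1.internal.example",
    "v2": "https://orders-v2.internal.example",
}
DEFAULT_VERSION = "v1"


def select_backend(request: Request) -> str:
    """Pick a backend URL from the API version the caller asked for."""
    version = request.headers.get("Api-Version", DEFAULT_VERSION)
    # Unknown versions fall back to the default backend instead of failing.
    return BACKENDS.get(version, BACKENDS[DEFAULT_VERSION])
```

Because callers only ever see the gateway endpoint, a backend can be moved or replaced by changing this mapping alone.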

From a design perspective, I recommend decoupling the business logic from the transport layer. This way, we can replace individual components of the integration with new technology in the future without having to redesign the entire application. The API gateway exposes a service contract over a secured API endpoint, which can be private. Other systems or components call the API gateway and remain unaware of the complexity behind the endpoint.
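
One way to picture this decoupling in code is a small contract that the business logic depends on, with the transport supplied from outside. This is a sketch under assumptions, not a prescribed implementation: the `Transport` protocol, the two fake transports and `get_customer` are hypothetical names invented for illustration.

```python
from typing import Protocol


class Transport(Protocol):
    """Anything that can deliver a request to the on-premises system."""

    def send(self, operation: str, payload: dict) -> dict: ...


class FakeVpnTransport:
    """Stand-in for a call routed over a VPN-connected virtual network."""

    def send(self, operation: str, payload: dict) -> dict:
        return {"via": "vpn", "operation": operation, "echo": payload}


class FakeRelayTransport:
    """Stand-in for a call routed over a relay connection."""

    def send(self, operation: str, payload: dict) -> dict:
        return {"via": "relay", "operation": operation, "echo": payload}


def get_customer(transport: Transport, customer_id: str) -> dict:
    """Business logic: it knows the contract, not how the request travels."""
    return transport.send("get_customer", {"id": customer_id})
```

Swapping `FakeVpnTransport()` for `FakeRelayTransport()` changes how the request travels without touching `get_customer`, which is the property the recommendation is after.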

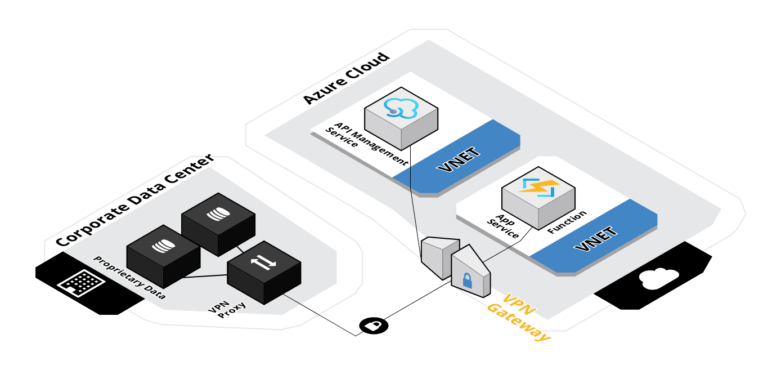

Service Bus Based Integration

As an alternative, we can use Azure Service Bus. This is an integration service that works with both queues and hybrid relays. A queue holds messages in first-in-first-out (FIFO) order until a receiver pulls them off. The remote server can trigger on messages being added and does not require a constant connection. However, this does require an asynchronous design pattern.
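
The asynchronous pattern can be sketched with an in-process queue standing in for the cloud queue. This is only an illustration of the sender/receiver split, assuming a single receiver; it does not use the Service Bus SDK, and the message names and the `None` stop marker are made up for the example.

```python
import queue
import threading


def run_receiver(inbox: queue.Queue, processed: list) -> None:
    """Pull messages off the queue in arrival order until a stop marker arrives."""
    while True:
        message = inbox.get()  # blocks until a message is available
        if message is None:  # None is used here as a stop marker
            break
        processed.append(f"handled {message}")


inbox = queue.Queue()  # queue.Queue is first-in-first-out
processed = []
receiver = threading.Thread(target=run_receiver, args=(inbox, processed))
receiver.start()

# The sender enqueues and moves on; it never waits for a reply.
for order_id in ("order-1", "order-2", "order-3"):
    inbox.put(order_id)
inbox.put(None)
receiver.join()
```

The sender gets no return value from the receiver, so any result has to travel back as a separate message or be looked up later. That is the design cost of the queue model.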

An alternative would be to use Hybrid Relay. The service creates hybrid connections that use remote listener services, deployed on the on-premises servers, to create a redundant set of private channels. This does not require any VPN setups and if you design for redundancy, then you can support synchronous calls with error handling.
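
A synchronous call with error handling over redundant listeners could look roughly like the following sketch. It shows caller-side failover only, as an assumption about how one might handle errors; the listener functions and the `RelayUnavailable` exception are hypothetical, and no real relay API is used.

```python
from typing import Callable, Sequence


class RelayUnavailable(Exception):
    """Raised when every listener failed to answer."""


def call_with_failover(listeners: Sequence[Callable[[str], str]], request: str) -> str:
    """Try each listener in order and return the first successful response."""
    errors = []
    for listener in listeners:
        try:
            return listener(request)
        except ConnectionError as exc:
            errors.append(exc)  # remember the failure and try the next one
    raise RelayUnavailable(f"all {len(listeners)} listeners failed: {errors}")


def broken_listener(request: str) -> str:
    # Simulates an on-premises listener that is offline.
    raise ConnectionError("listener offline")


def healthy_listener(request: str) -> str:
    return f"ok: {request}"
```

For example, `call_with_failover([broken_listener, healthy_listener], "ping")` skips the offline listener and returns the healthy one's answer, while a list containing only broken listeners raises `RelayUnavailable` so the caller can report the error.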

Hybrid Relay can run in different modes. We can register the remote listener service using the Hybrid Relay Connection Manager. This way, the service works as a proxy between the caller and the target and forwards the request to the intended endpoint.

We can also use the Azure Function Proxy feature to create an array of on-premises connections and route traffic to different data centres based on custom business logic. Some considerations of this model:

- The relay manager must have network access to the target server, and it is recommended to place the service in a separate DMZ/subnet with a firewall and a zero-trust policy

- Good alternative if the on-premises endpoints change frequently

- Requires code in Azure Function or App Service within Azure to set server targets

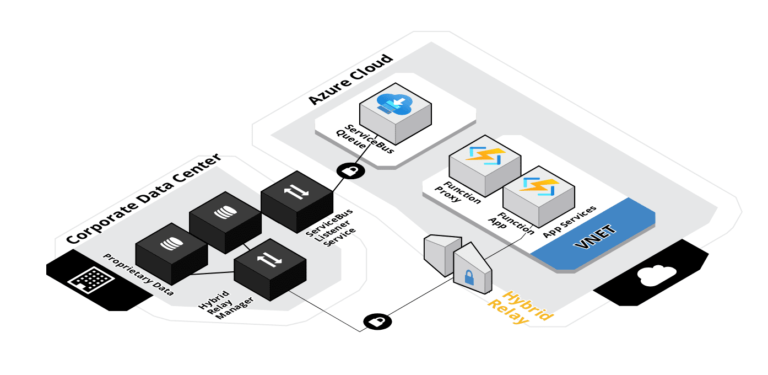

Yet another alternative is to use the Hybrid Relay library in a custom .NET service. This way, our custom service replaces the Hybrid Relay Manager, performing custom business logic as well as opening a private channel. The appeal of this model is that it moves business logic away from Azure to the on-premises source system and lets Azure deal with transport and integration. The effects are:

- Does not require using custom apps, functions or code in Azure

- Can trigger from HTTP calls in Azure, for example a Logic App

- Custom service receives the entire HTTP message, and can respond depending on query parameters, POST body, etc.
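
As a rough sketch of the last point, the function below decides its response from the HTTP method, the query string and the POST body. The article's listener would be a .NET service built on the Hybrid Relay library; Python is used here only to illustrate the dispatch logic, and the `customerId` parameter, the URLs and the response shapes are assumptions made up for the example.

```python
import json
from urllib.parse import parse_qs, urlsplit


def handle_request(method: str, url: str, body: bytes = b"") -> tuple[int, dict]:
    """Return (status code, response payload) for a relayed HTTP request."""
    query = parse_qs(urlsplit(url).query)
    if method == "GET":
        customer_id = query.get("customerId", [None])[0]
        if customer_id is None:
            return 400, {"error": "customerId is required"}
        return 200, {"customerId": customer_id, "source": "on-premises"}
    if method == "POST":
        try:
            payload = json.loads(body)
        except ValueError:  # covers malformed JSON and undecodable bytes
            return 400, {"error": "body must be JSON"}
        return 201, {"received": payload}
    return 405, {"error": f"{method} not supported"}
```

A caller in Azure, such as a Logic App, would only need to issue a plain HTTP request; everything that interprets the message stays next to the source system.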

Again, my recommendation is to decouple transport and integration contracts from business logic. I would prefer to place custom code close to the source system, as this makes it easier to manage responsibility between system boundaries. The complexity is moved outside the consumer (Azure) system, and we can deploy all cloud resources using declarative configuration and zero code.

The Importance of Robust Architecture Blueprints

Every design has pros and cons, and hybrid scenarios offer an overwhelming number of alternatives. It is important to analyse your business drivers and technical constraints thoroughly, and to design a robust architecture that can grow and adapt for years to come.

If you are interested in getting support for your hybrid cloud architecture initiative, take a look at our Architecture Planning & Design service offering or contact us for more information.